Nadja Pernat is a trained biologist, interested in how anthropogenic changes and activities influence biodiversity. She works in the Animal Ecology Lab at the Institute of Landscape Ecology at the University of Münster. Her doctoral thesis was about the citizen science project “Mosquito atlas” and how citizen science-generated data can be used for research.

Can you tell me a bit about your work in citizen science and the projects you are involved in?

I am a trained biologist who went into science communication and then back to research. After university, I was an editor at a company producing textbooks for German high schools, then I created travelling exhibitions for the Max Planck Society and was on the team for the “Beyond the lab” citizen science exhibition at the Science Museum in London. Back in science, I completed my doctoral thesis at the Leibniz Centre for Agricultural Landscape Research (ZALF) in Müncheberg. My thesis was about the citizen science project “Mosquito atlas” (‘Mückenatlas’) and how the data randomly collected by citizens can be used for research.

Citizen science data is my favourite topic. I am particularly interested in how anthropogenic changes and activities influence biodiversity and for that I use citizen science data. After my time as a doctoral researcher, I started a postdoc position at the University of Münster in March 2022.

After having finished your work on the mosquito atlas, how do you plan to continue working with citizen science-generated data?

One field I am interested in is called ‘iEcology’. iEcology uses big data from online platforms, not only citizen science platforms but also other webpages, social media, everything that contains images, text, etc. to find out if the data out there can contribute to the global biodiversity collection of data and if ecological research can be done with it. I am also teaching at the university and would like to create lectures on data and species literacy, and show students how to use big data platforms such as GBIF as well as species identification apps to teach themselves species literacy.

This sounds fascinating! I wanted to learn more about your work because you are a user of the Pl@ntNet-API, one of several technological services that the Cos4Cloud project is developing. Could you tell me how you started using the Pl@ntNet-API?

During my time at ZALF I became part of a network, the so-called ‘coding club’, which grew out of an EU COST action called Alien CSI. The coding club started after a workshop on citizen science and ecological interactions within this COST action, which also resulted in a paper on that topic (“Species interactions: next-level citizen science”). I used the Pl@ntNet-API in the framework of the coding club. With citizen science you usually have primary observations, that is, people take a picture or report an occurrence of a species. But people also incidentally collect interaction data on what the species they observe is doing: Is the insect sitting on a plant? In the case of mosquitoes, is it biting?

I was specifically interested in plant-pollinator interactions caught on images provided by citizens to citizen science platforms like iNaturalist. But how could I identify the plants an insect is sitting on from a picture?

I am not a botanist and do not have the skills for plant identification, so I wanted to use an application with image recognition to help. It had to be possible to develop an automatic workflow, since I wanted to avoid manually downloading the pictures from iNaturalist and then using the Pl@ntNet app on each individual photograph to identify a potential plant in it. Since the COST action’s coding club is about invasive species, I looked for a suitable non-native species that we did not know a lot about and that could serve as our research subject. The coding club members came up with a species of wasp not native to Europe called Isodontia mexicana (the Mexican grass-carrying wasp). This species was perfect because there were a lot of iNaturalist pictures but not much literature. During a joint hackathon in Romania at the beginning of this year (2022) I developed the automatic workflow.

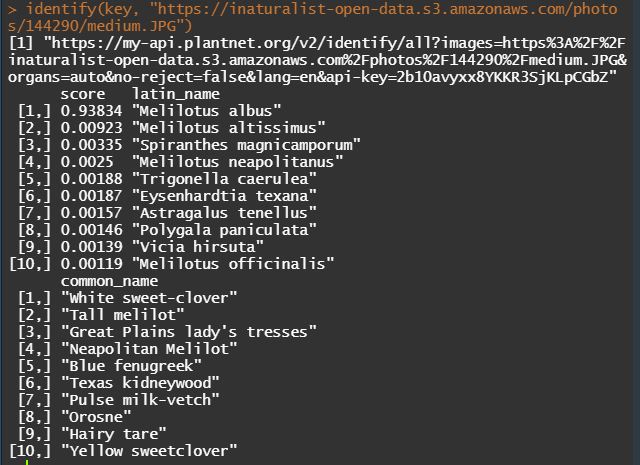

The Pl@ntNet app, which I use personally to identify plants outdoors, is also available as the Pl@ntNet-API. Luckily, I came across the plantnet R package for communicating with the Pl@ntNet-API via R (editor’s note: R packages are extensions to the R statistical programming language), which Tom August, who is also part of the coding club, had developed. This was great because I work with R, and with Tom’s package I didn’t have to access the Pl@ntNet-API directly, which I hadn’t mastered at that time. In short, for the automated workflow I retrieved all the picture URLs of Isodontia mexicana from iNaturalist with a dedicated R package (there are a lot of R packages out there to communicate with the iNaturalist API as well) and fed them into the Pl@ntNet-API with the help of the plantnet R package. For every image, the service returns a list of candidate plant species potentially shown in the picture together with the wasp.
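For readers who want a feel for the shape of such a workflow, here is a minimal sketch in Python rather than R. It is not the code used in the study: the function `run_workflow`, the stand-in `stub_identify`, the example URLs and the species scores are all hypothetical, and no real iNaturalist or Pl@ntNet call is made. The sketch only shows the loop of passing each photo URL to an identification service and keeping the ranked candidate list.

```python
from typing import Callable, Iterable

# A candidate is (plant species name, score between 0 and 1).
Candidate = tuple[str, float]


def run_workflow(
    photo_urls: Iterable[str],
    identify: Callable[[str], list[Candidate]],
) -> list[dict]:
    """Send every photo URL to an identification service and record
    the ranked candidates plus the top-scoring suggestion."""
    rows = []
    for url in photo_urls:
        # Rank candidates from highest to lowest score.
        ranked = sorted(identify(url), key=lambda c: c[1], reverse=True)
        # A photo without any recognised plant yields no top suggestion.
        top_species, top_score = ranked[0] if ranked else (None, 0.0)
        rows.append(
            {
                "url": url,
                "top_species": top_species,
                "top_score": top_score,
                "candidates": ranked,
            }
        )
    return rows


def stub_identify(url: str) -> list[Candidate]:
    """Hypothetical stand-in for an image-identification service.
    The species names and scores are invented for illustration."""
    canned = {
        "https://example.org/photo1.jpg": [
            ("Solidago canadensis", 0.31),
            ("Eryngium planum", 0.86),
        ],
        "https://example.org/photo2.jpg": [],  # no plant recognised
    }
    return canned.get(url, [])


results = run_workflow(
    ["https://example.org/photo1.jpg", "https://example.org/photo2.jpg"],
    stub_identify,
)
for row in results:
    print(row["url"], row["top_species"], row["top_score"])
```

In a real pipeline, the `identify` argument would wrap whatever client talks to the identification service, and the URL list would come from a query against the observation platform.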

How did both the Pl@ntNet-API and the algorithm do? What do you think about their performance?

The API worked perfectly well. The Pl@ntNet team was very helpful. I had a problem with the API because it automatically rejected pictures that did not contain a plant. As far as I understood, this is for protection of personal privacy. The Pl@ntNet team helped me to overcome this issue by adapting their API accordingly and adding an additional parameter which Tom August and I could use to fix the problem. That was amazing.

In terms of performance of Pl@ntNet, I compared the accuracy of the app to identify the plants on the iNaturalist pictures with that of experts. I gave expert botanists 250 iNaturalist URLs of the same Isodontia mexicana pictures that I ran through Pl@ntNet, but the botanists did not know about the suggestions of the Pl@ntNet app and their scores. (For context: after a user uploads a plant photo to the app, the service returns a list of species and the likelihood (score) of the image being species ‘x’ or ‘y’.)

The whole endeavour was to find out at what score threshold the workflow was reliable. To assess this, I compared the identifications by the experts with the first (highest-scoring) suggestion from the app; that is, I checked whether the plant identifications of the experts and Pl@ntNet were in agreement.

As it turned out, the score has to be over 80% or 0.8 to be reliable, at least for the identification of species, provided that the experts are correct. For genera and families it is around 0.6. However, the interesting thing is that the experts also had difficulties in identifying the plant species, which is absolutely understandable since most of the pictures only showed tiny sections of flowers or leaves of plants from all over the world.
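To illustrate the idea of a score threshold, the following Python sketch computes how often the top suggestion agrees with the expert identification once low-scoring suggestions are discarded. It is a simplified illustration and not the analysis from the preprint: the function `agreement_at_threshold` and the eight records are hypothetical, and the numbers are invented.

```python
def agreement_at_threshold(records, threshold):
    """Keep only suggestions whose score reaches the threshold and
    return (number kept, share of those agreeing with the expert).
    Each record is (top_score, agrees_with_expert)."""
    kept = [agrees for score, agrees in records if score >= threshold]
    if not kept:
        return 0, None  # nothing left to evaluate at this threshold
    return len(kept), sum(kept) / len(kept)


# Invented example data: (top score, does it match the expert's answer?)
records = [
    (0.95, True),
    (0.85, True),
    (0.82, True),
    (0.70, False),
    (0.65, True),
    (0.40, False),
    (0.30, False),
    (0.15, False),
]

for threshold in (0.0, 0.6, 0.8):
    n_kept, rate = agreement_at_threshold(records, threshold)
    print(f"threshold {threshold}: {n_kept} images kept, agreement {rate}")
```

Raising the threshold trades coverage for reliability: fewer images receive an identification, but those that remain agree with the experts more often.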

Overall, in this preliminary test the app performed pretty well. For a first exploration of an introduced species or a species you do not know much about, the workflow is quite useful for generating research questions. The preprint of this study (“An iNaturalist-Pl@ntNet-workflow to identify plant-pollinator interactions – a case study of Isodontia mexicana”) has been published online on a preprint server.

Have you continued using the Pl@ntNet-API since then?

Yes, I have, and I will continue to do so because the study is only a preprint at this stage and we still need to identify the plants on the remaining 1,250 wasp pictures. Then, I will repeat the analysis to get more accurate score thresholds. I will definitely continue to use the Pl@ntNet-API because I am interested in other (invasive) species and we have to test the workflow with a lot of species to see if it works for other insects and interactions.

Is there something else that you would like to share with me about the Pl@ntNet-API and Pl@ntNet?

It was one of the first times I used an API. There is a review from 2020 by Hamlyn G. Jones (“What plant is that? Tests of automated image recognition apps for plant identification on plants from the British flora”) in which he assessed different plant identification apps, and only two of them are available as an API. In times of open access and open science it is very important that the data is accessible for everyone to use. Since all plant identification apps benefit from people’s data, data generated through citizen science, offering an API would be a natural course of action.

The Pl@ntNet app can also digest more than one picture. You can upload flowers and leaves from the same plant, and the algorithm gives you better suggestions if you provide more than one picture. The review article points that out as well, noting that Pl@ntNet is one of the few apps to offer this feature.

Visit the website of the COST action Alien CSI to learn more about their work on understanding alien species through citizen science.

To learn more about Cos4Cloud’s services for citizen science, visit the project’s services description.